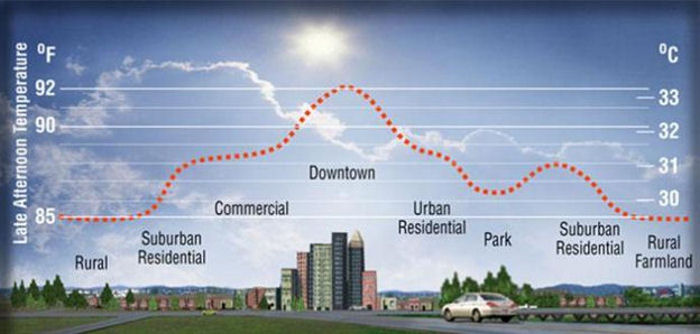

An Urban Heat Island (UHI) is a localized area, typically in a city, where temperatures are significantly higher than the surrounding rural areas due to human activities and modifications of the landscape. This phenomenon is caused by factors such as increased impervious surfaces, limited vegetation, and the heat generated from buildings and vehicles.

U.S. EPA--https://www.nsf.gov/news/mmg/mmg_disp.jsp?med_id=75857&from=mn

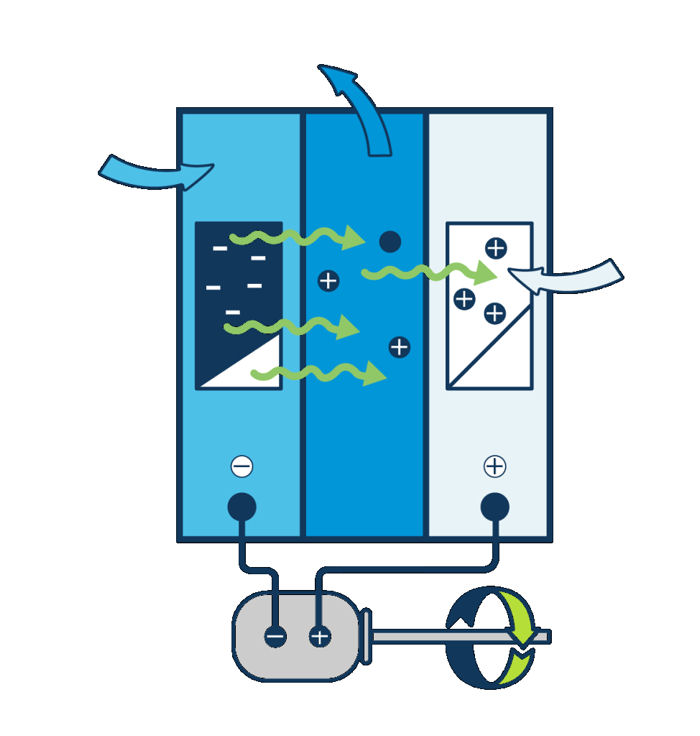

A heat wave is essentially a temperature anomaly of 3-5°F above regional climatology that persists for 2 or more days, as discussed in the previous commentary. Conditions in an urban heat island amount to a virtually continuous “heat wave” relative to current temperatures in the rural areas surrounding the city. The figure above illustrates the temperature impact of varying levels of development within an urban heat island. Tall, dense infrastructure and large paved areas are major contributors to urban heating.
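The working definition above can be made concrete with a short sketch. It flags runs of days on which the temperature exceeds a reference by at least a threshold for a minimum number of consecutive days. The function name `find_heat_waves`, the default threshold of 3°F (the low end of the 3-5°F range), and the sample temperatures are illustrative assumptions, not data or methods from any source.

```python
def find_heat_waves(daily_temps_f, reference_f, threshold_f=3.0, min_days=2):
    """Return (start, end) day-index pairs, inclusive, for each run of at
    least `min_days` consecutive days whose temperature exceeds the
    reference by `threshold_f` or more.

    `threshold_f=3.0` is an assumed default taken from the low end of the
    3-5 F range; the reference can be climatology or rural temperatures.
    """
    if len(daily_temps_f) != len(reference_f):
        raise ValueError("temperature and reference series must match in length")

    waves = []
    run_start = None
    for day, (temp, ref) in enumerate(zip(daily_temps_f, reference_f)):
        if temp - ref >= threshold_f:
            if run_start is None:
                run_start = day  # a new warm run begins
        else:
            if run_start is not None and day - run_start >= min_days:
                waves.append((run_start, day - 1))
            run_start = None
    # Close out a run that lasts through the final day.
    if run_start is not None and len(daily_temps_f) - run_start >= min_days:
        waves.append((run_start, len(daily_temps_f) - 1))
    return waves


# Hypothetical week of rural temperatures against a flat 85 F normal.
normals = [85.0] * 7
rural = [85.0, 89.0, 90.0, 86.0, 88.0, 84.0, 91.0]
rural_waves = find_heat_waves(rural, normals)

# Hypothetical urban core running a steady 4 F above the rural readings,
# compared against those rural readings rather than against climatology.
urban = [t + 4.0 for t in rural]
urban_vs_rural = find_heat_waves(urban, rural)
```

With these made-up numbers, the rural series produces a single two-day event, while the urban series compared against its rural surroundings is flagged for the entire week, which is the sense in which an urban heat island behaves like a continuous heat wave.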

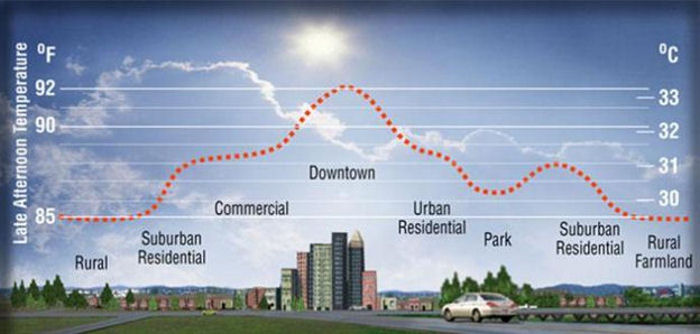

One of the suggested responses to climate change is the development of “15-minute cities”, which aim to minimize personal vehicle travel and encourage walking, cycling, and the use of public transit to move around the city. These cities would offer the normal services residents require, including medical and dental offices, emergency care facilities, schools, retail stores, and health clubs. To support these services, the population density of 15-minute cities would have to be several times that of typical large cities, and higher population density increases the UHI effect.

The photo below is an example of the city environment required to support high population density. The tall, dense buildings effectively block the wind and trap the heat collected and generated in the city.

Detailed attention to building spacing and orientation, building construction, and the inclusion of green spaces would be essential to prevent such very densely populated cities from becoming even more intense urban heat islands.

If climate change is increasing the frequency and/or intensity of heat waves, as IPCC AR6 suggests, then an increase in city size and population density, as would be the case with 15-minute cities, would appear to be a step in the wrong direction from the standpoints of public health and safety, particularly during the summer months. The normal elevation of temperature caused by the UHI effect would increase the temperatures in the areas surrounding the cities, as shown in the first graphic above. Heat waves in the region would further increase temperatures both in the cities and in the surrounding areas. The cities would tend to be less affected by cold waves because of their internal heat gains and the effects of wind blocking.

There are also significant sociological issues with very high population density developments. The US experience with such developments, frequently referred to as “projects”, has not been positive, though most of this experience is with rental properties rather than owned residences.

The Right Insight is looking for writers who are qualified in our content areas. Learn More...
